definity Expands Support to Spark Streaming

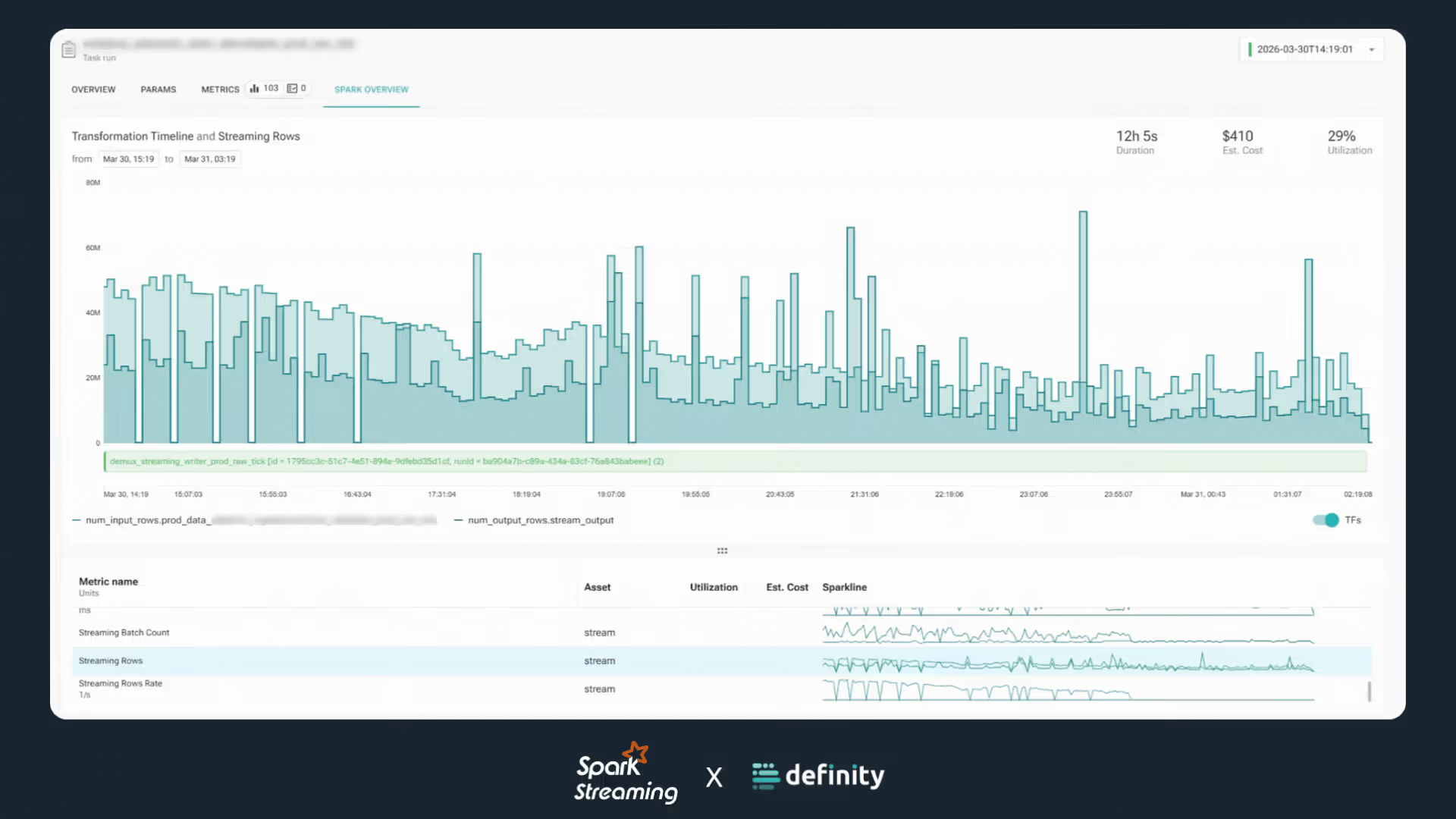

Definity brings real-time, execution-level observability and optimization to Spark Streaming, enabling continuous data pipelines to be cost-efficient, reliable, and interpretable by AI-driven agentic systems

Data Engineers can now apply the same in-motion observability and cost optimization rigor to their always-on workloads.

The Hidden Complexity of Spark Streaming

Streaming pipelines introduce a different class of operational challenges. Because they never truly complete, traditional monitoring patterns break down.

In batch jobs, engineers can inspect execution after the fact. In streaming systems, by the time a problem is visible, it may have already impacted users or downstream systems.

Resources remain allocated even when utilization drops. Executors linger. Costs accumulate quietly. Latency drifts. Data freshness degrades gradually rather than failing loudly.

The result is subtle inefficiency and fragile reliability.

Bringing In-Motion Observability to Streaming

definity extends full-stack, in-motion observability into Spark Streaming environments.

Instead of analyzing exported metrics after the fact, teams gain visibility directly at the job execution layer while the pipeline is running. Infrastructure behavior, cost signals, performance characteristics, and data health indicators are observed continuously.

This applies across event ingestion, CDC pipelines, IoT streams, log processing, fraud detection, personalization systems, and operational dashboards.

Streaming workloads are no longer opaque. They become inspectable in real time.

Building the foundations for Agentic Data Engineering

Agentic systems require deep runtime context. They need to understand executor behavior, resource allocation, lineage, performance bottlenecks, and data health in real time in order to recommend or even take corrective actions.

Traditional monitoring stacks were designed for dashboards and alerts. They were not designed to power intelligent agents operating inside live pipelines.

By extending into Spark Streaming, definity provides the runtime intelligence layer that agentic data engineering depends on. Continuous pipelines become not just monitored, but interpretable by AI systems capable of root-causing issues, optimizing performance, and preventing incidents before they escalate.

Strengthening the Lakehouse for Real-Time Workloads

Spark Streaming support expands definity’s coverage across the Lakehouse ecosystem.

Batch and streaming workloads can now be optimized under a unified execution-level model, improving reliability, cost efficiency, and trust across always-on data products.

With Spark Streaming support, definity brings execution-level visibility, optimization, and AI readiness to continuous data systems. Streaming workloads are finally observable, optimized, and ready for the agentic future.